studio.heelab

[DL for CV] Image Classification with Linear Classifiers

Lecture: https://www.youtube.com/watch?v=pdqofxJeBN8&list=PLoROMvodv4rOmsNzYBMe0gJY2XS8AQg16&index=2

1. Image Classification

The problem: Semantic Gap

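To a computer, an image is just a tensor of integers in the range [0, 255]; the semantic gap is the distance between this grid of numbers and the concept a human sees in it.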

Challenges

- Viewpoint variation

- Illumination

- Background clutter

- Occlusion

- Deformation

- Intraclass variation

An image classifier

    def classify_image(image):
        # Some magic here
        return class_label

There is no obvious way to hard-code this function. Earlier attempts relied on hand-written rules: find edges -> find corners

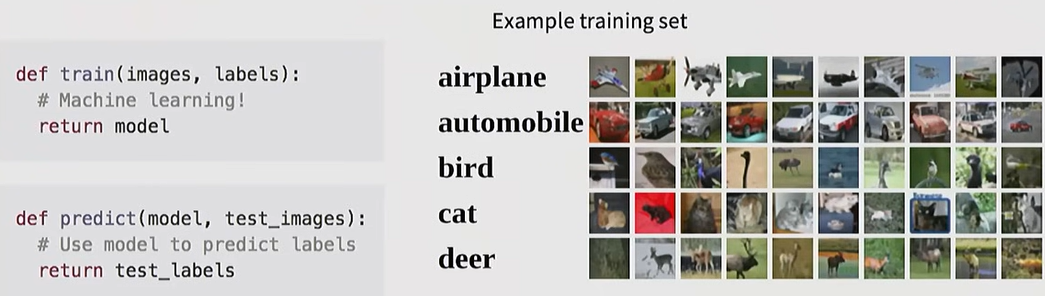

2. Data-driven Approach

3 Step Process

- Collect a dataset of images and labels

- Use ML algorithms to train a classifier

- Evaluate the classifier on new images

3. KNN (K-Nearest Neighbor) Algorithm

First classifier: Nearest Neighbor

- Memorize all data and labels

    def train(images, labels):
        # Machine learning!
        return model

- Predict the label of the most similar training image

    def predict(model, test_images):
        # Use model to predict labels
        return test_labels
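The two steps above can be sketched as a tiny nearest-neighbor classifier. This is only a minimal illustration, assuming NumPy, flattened images stored as rows, and L1 distance; the `NearestNeighbor` class name and the toy data are made up for this example.

```python
import numpy as np


class NearestNeighbor:
    """Minimal 1-nearest-neighbor classifier using L1 distance (illustrative sketch)."""

    def train(self, images, labels):
        # "Training" only memorizes the data: images is (N, D), labels is (N,).
        # Cast to float so that subtracting uint8 pixels cannot wrap around.
        self.images = np.asarray(images, dtype=np.float64)
        self.labels = np.asarray(labels)

    def predict(self, test_images):
        test_images = np.asarray(test_images, dtype=np.float64)
        predictions = np.empty(len(test_images), dtype=self.labels.dtype)
        for i, image in enumerate(test_images):
            # L1 distance from this test image to every training image.
            distances = np.abs(self.images - image).sum(axis=1)
            # Copy the label of the closest training image.
            predictions[i] = self.labels[np.argmin(distances)]
        return predictions


# Toy data: four flattened "images" with 3 pixels each (hypothetical values).
train_images = np.array([[0, 0, 0], [10, 10, 10], [200, 200, 200], [255, 250, 245]])
train_labels = np.array([0, 0, 1, 1])

model = NearestNeighbor()
model.train(train_images, train_labels)
predicted = model.predict(np.array([[5, 5, 5], [230, 230, 230]]))
print(predicted)
```

Note that `train` does no real work, while `predict` loops over the whole training set for every test image.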

Distance Functions

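L1 (Manhattan) distance

- Sum of pixel-wise absolute differences: $d_1(I_1, I_2) = \sum_p |I_1^p - I_2^p|$

L2 (Euclidean) distance

- Square root of the sum of pixel-wise squared differences: $d_2(I_1, I_2) = \sqrt{\sum_p (I_1^p - I_2^p)^2}$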
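As a small sketch of the two distances, assuming NumPy and two tiny 2x2 "images" with made-up pixel values (the function names `l1_distance` and `l2_distance` are chosen for this example only):

```python
import numpy as np


def l1_distance(a, b):
    # Sum of pixel-wise absolute differences.
    return np.abs(a - b).sum()


def l2_distance(a, b):
    # Square root of the sum of pixel-wise squared differences.
    return np.sqrt(((a - b) ** 2).sum())


# Hypothetical 2x2 grayscale images; floats avoid uint8 wrap-around on subtraction.
image_a = np.array([[56, 32], [10, 18]], dtype=np.float64)
image_b = np.array([[10, 20], [24, 17]], dtype=np.float64)

print(l1_distance(image_a, image_b))  # |46| + |12| + |-14| + |1| = 73.0
print(l2_distance(image_a, image_b))  # sqrt(46^2 + 12^2 + 14^2 + 1^2) = sqrt(2457)
```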

Hyperparameters

Choices about the algorithm itself that are set in advance rather than learned from the data

e.g. distance metric, K

Choose hyperparameters using the validation set

Only run on the test set once at the very end.

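Setting Hyperparameters

Idea 1. Choose hyperparameters that work best on the training data <- bad idea: K = 1 always works perfectly on the training data

Idea 2. Choose hyperparameters that work best on the test data <- bad idea: gives no indication of how the algorithm will perform on new data

Idea 3. Split data into train, val, and test; choose hyperparameters on val and evaluate on test <- better idea

Idea 4. Cross-validation: split the data into folds, try each fold as the validation set, and average the results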
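Cross-validation for choosing K could look roughly like the sketch below. It assumes NumPy, a simple kNN with L1 distance, and synthetic two-class data generated only for illustration; `knn_predict` and `cross_validate` are hypothetical helper names. A held-out test set, used once at the very end, is omitted here.

```python
import numpy as np


def knn_predict(train_x, train_y, test_x, k):
    # L1 distances between every test row and every training row: shape (M, N).
    distances = np.abs(test_x[:, None, :] - train_x[None, :, :]).sum(axis=2)
    # Indices of the k closest training points for each test point.
    nearest = np.argsort(distances, axis=1)[:, :k]
    # Majority vote among the k neighbor labels (ties go to the smallest label).
    return np.array([np.bincount(train_y[row]).argmax() for row in nearest])


def cross_validate(x, y, k, num_folds=5):
    x_folds = np.array_split(x, num_folds)
    y_folds = np.array_split(y, num_folds)
    accuracies = []
    for i in range(num_folds):
        # Fold i is the validation set; the remaining folds form the training set.
        train_x = np.concatenate(x_folds[:i] + x_folds[i + 1:])
        train_y = np.concatenate(y_folds[:i] + y_folds[i + 1:])
        predictions = knn_predict(train_x, train_y, x_folds[i], k)
        accuracies.append(np.mean(predictions == y_folds[i]))
    # Average validation accuracy over all folds.
    return float(np.mean(accuracies))


# Synthetic two-class data, made up for illustration.
rng = np.random.default_rng(0)
x = np.concatenate([rng.normal(0.0, 1.0, (50, 2)), rng.normal(3.0, 1.0, (50, 2))])
y = np.array([0] * 50 + [1] * 50)
order = rng.permutation(len(x))  # shuffle so each fold contains both classes
x, y = x[order], y[order]

scores = {k: cross_validate(x, y, k) for k in (1, 3, 5, 7)}
best_k = max(scores, key=scores.get)
print(scores, best_k)
```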

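Training is very fast, O(1), because it only stores the data, but prediction is slow, O(N), because each test image must be compared with all N training images. This is the opposite of what we want: slow training is acceptable, but prediction should be fast. Furthermore, pixel-level distances do not reflect the semantic similarity of images well.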

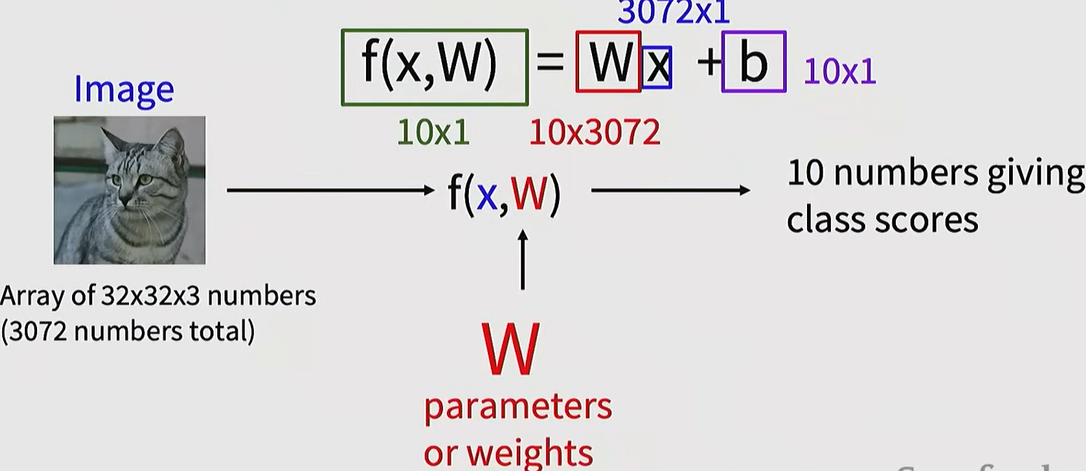

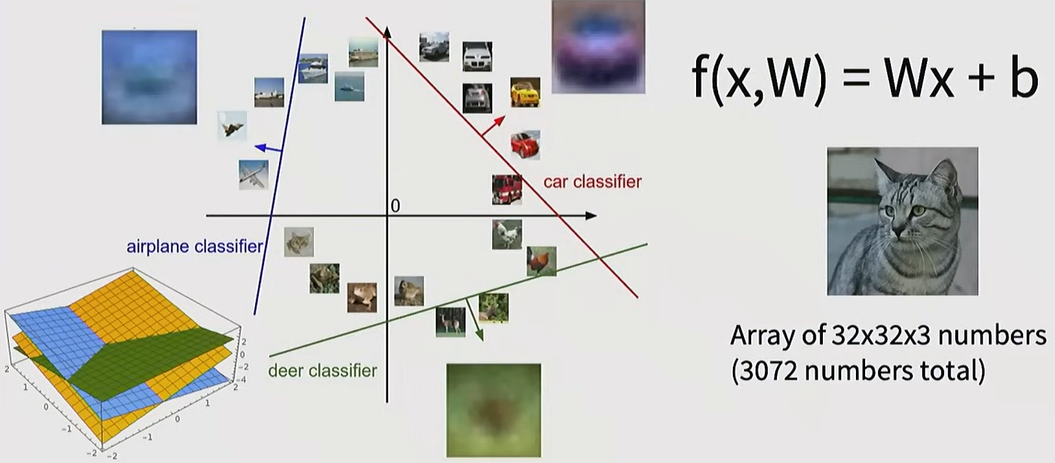

4. Linear Classifier

A parametric approach that maps the input image $x$ to output class scores.

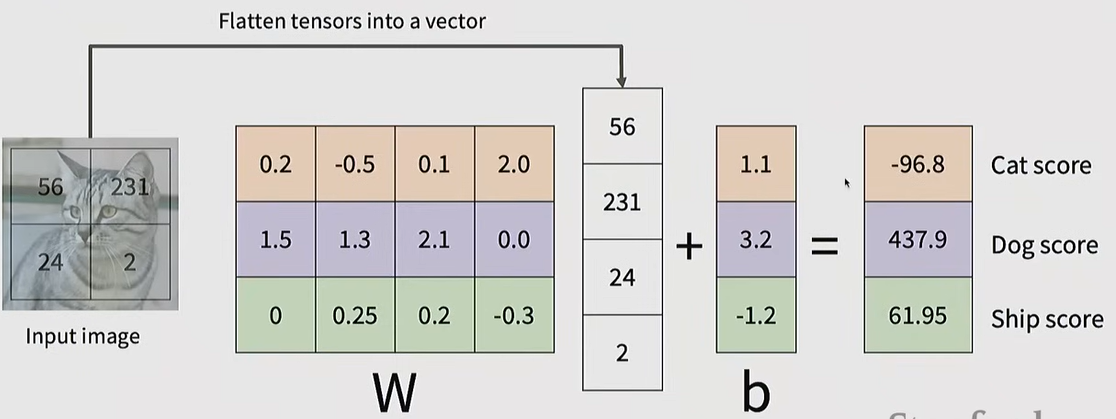

$f(x, W) = Wx + b$

W (weights): parameters that must be learned during training.

b (bias): per-class offsets that adjust the preference for specific classes independently of the input.
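A minimal sketch of the score function, assuming NumPy, a 4-pixel image, and 3 classes. The values of `W` and `b` are illustrative numbers, not learned weights, and `linear_scores` is a name chosen for this example.

```python
import numpy as np


def linear_scores(x, W, b):
    # W: (num_classes, D), x: (D,), b: (num_classes,) -> scores: (num_classes,)
    return W @ x + b


# A 2x2 grayscale image flattened into a vector of 4 pixels.
image = np.array([[56, 231], [24, 2]], dtype=np.float64)
x = image.reshape(-1)

# Illustrative parameters: one row of W and one entry of b per class.
W = np.array([
    [0.2, -0.5, 0.1, 2.0],
    [1.5, 1.3, 2.1, 0.0],
    [0.0, 0.25, 0.2, -0.3],
])
b = np.array([1.1, 3.2, -1.2])

scores = linear_scores(x, W, b)
predicted_class = int(np.argmax(scores))  # index of the highest-scoring class
print(scores, predicted_class)
```

Unlike nearest neighbor, prediction here costs one matrix-vector product regardless of the training set size.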

3 Perspectives

1. Algebraic Perspective

Class scores are computed by matrix multiplication

The input image is flattened into a vector first

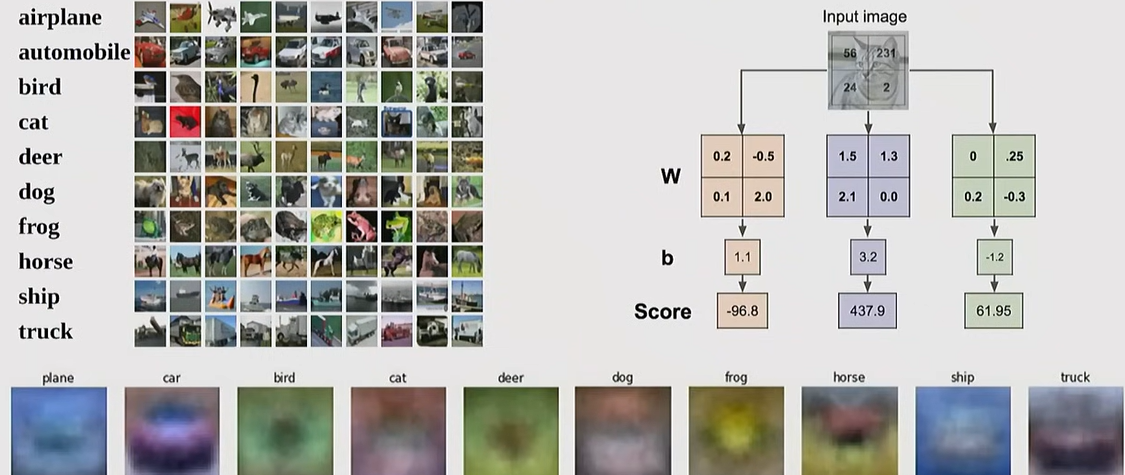

2. Visual Perspective

Each row of the weight matrix W acts as a template for its corresponding class
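One way to see the template view is to reshape each row of W back into the image shape. The sketch below assumes NumPy, 10 classes, and 32x32x3 images (sizes picked for illustration), and uses random numbers as a stand-in for learned weights.

```python
import numpy as np

# Assumed sizes for illustration: 10 classes, 32x32 RGB images.
num_classes, height, width, channels = 10, 32, 32, 3
rng = np.random.default_rng(0)
W = rng.normal(size=(num_classes, height * width * channels))  # stand-in for learned weights

# Row k of W, reshaped to the image shape, is the template for class k.
templates = W.reshape(num_classes, height, width, channels)

# Rescale each template to [0, 255] so it could be displayed as an image.
lo = templates.min(axis=(1, 2, 3), keepdims=True)
hi = templates.max(axis=(1, 2, 3), keepdims=True)
template_images = ((templates - lo) / (hi - lo) * 255).astype(np.uint8)

# Ignoring the bias, each class score is the inner product of template and image.
image = rng.integers(0, 256, size=(height, width, channels)).astype(np.float64)
scores_from_templates = (templates * image).sum(axis=(1, 2, 3))
scores_from_matrix = W @ image.reshape(-1)
print(np.allclose(scores_from_templates, scores_from_matrix))
```

A high score therefore means the image lines up well with that class's single template.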

3. Geometric Perspective

The classifier is equivalent to drawing decision boundaries (hyperplanes) that separate the classes in a high-dimensional space

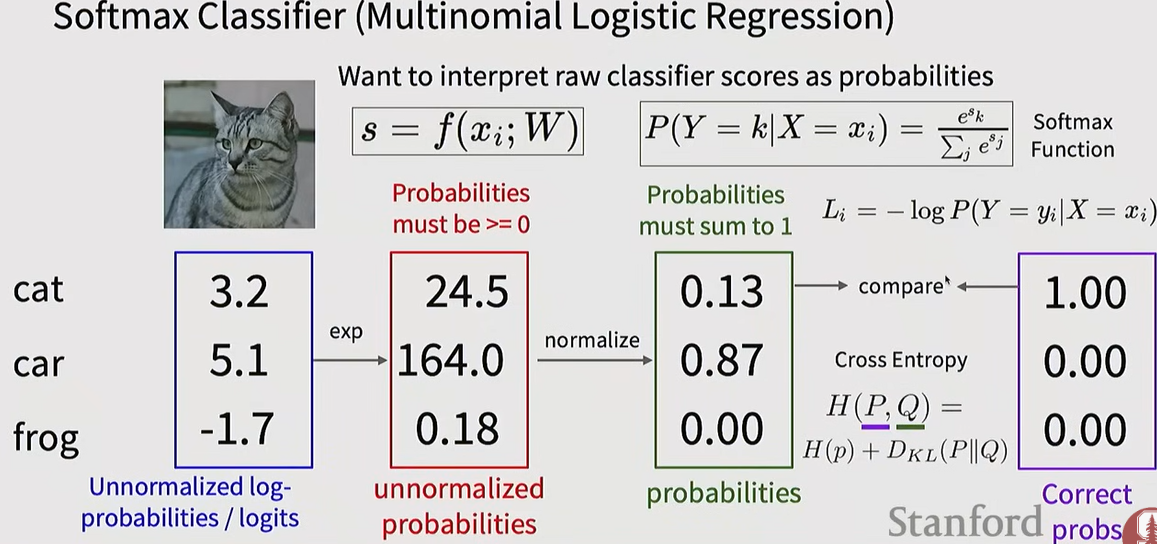

5. Loss Function and Softmax

Loss Function

A metric that quantifies how poorly the current classifier is performing (often referred to as "Unhappiness")

Softmax Classifier

It applies an exponential function to the output values (logits) of a linear classifier and normalizes them into probabilities between 0 and 1 that sum to 1: $P(Y = k \mid X = x_i) = \frac{e^{s_k}}{\sum_j e^{s_j}}$, where $s = f(x_i, W)$.
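A small softmax sketch, assuming NumPy and three example logits chosen for illustration. Subtracting the maximum before exponentiating is a common numerical-stability trick and does not change the result.

```python
import numpy as np


def softmax(logits):
    # Subtracting the max leaves the output unchanged but avoids overflow in exp.
    shifted = logits - np.max(logits)
    exp_scores = np.exp(shifted)
    # Normalize so the outputs are non-negative and sum to 1.
    return exp_scores / exp_scores.sum()


logits = np.array([3.2, 5.1, -1.7])  # example class scores
probs = softmax(logits)
print(probs, probs.sum())
```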

Cross-Entropy Loss

The goal is to minimize the negative log-likelihood of the correct class, $L_i = -\log P(Y = y_i \mid X = x_i)$. This is equivalent to maximizing the probability of the correct class.
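As a rough sketch of this loss for a single example, assuming NumPy and the same kind of illustrative logits (the function name `cross_entropy_loss` is chosen for this example). The log-probability is computed directly from the logits for numerical stability.

```python
import numpy as np


def cross_entropy_loss(logits, correct_class):
    # Stable log-softmax: log p_k = (s_k - max) - log(sum_j exp(s_j - max)).
    shifted = logits - np.max(logits)
    log_probs = shifted - np.log(np.exp(shifted).sum())
    # Negative log-likelihood of the correct class.
    return -log_probs[correct_class]


logits = np.array([3.2, 5.1, -1.7])  # example class scores
loss_if_class_0 = cross_entropy_loss(logits, 0)  # correct class has a low probability
loss_if_class_1 = cross_entropy_loss(logits, 1)  # correct class has the highest probability
print(loss_if_class_0, loss_if_class_1)
```

The loss is small when the correct class already receives most of the probability mass and grows as that probability approaches 0.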
